Getting Started Guide

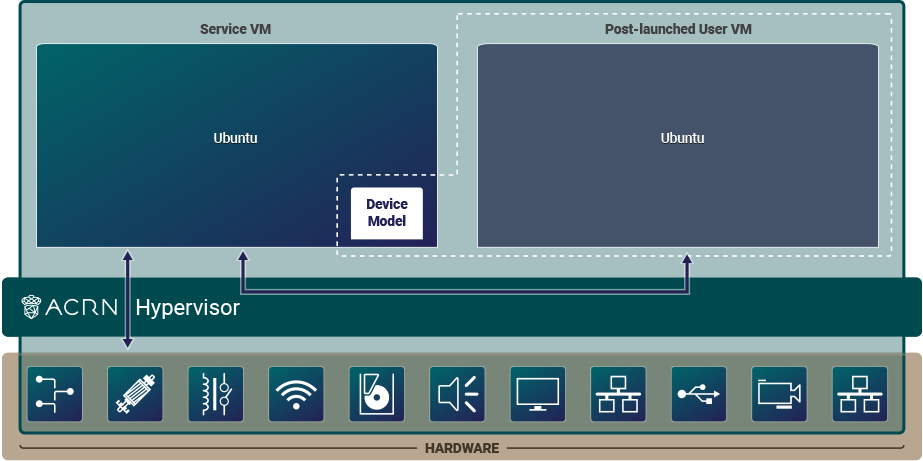

This guide will help you get started with ACRN. We’ll show how to prepare a build environment on your development computer. Then we’ll walk through the steps to set up a simple ACRN configuration on a target system. The configuration is an ACRN shared scenario and consists of an ACRN hypervisor, Service VM, and one post-launched User VM as illustrated in this figure:

Throughout this guide, you will be exposed to some of the tools, processes, and components of the ACRN project. Let’s get started.

Prerequisites

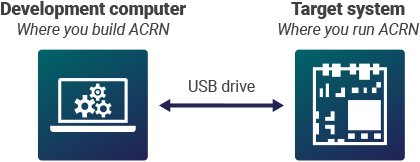

You will need two machines: a development computer and a target system. The development computer is where you configure and build ACRN and your application. The target system is where you deploy and run ACRN and your application.

Before you begin, make sure your machines have the following prerequisites:

Development computer:

Hardware specifications

A PC with Internet access (A fast system with multiple cores and 16GB memory or more will make the builds go faster.)

Software specifications

Ubuntu Desktop 22.04 LTS (ACRN development is not supported on Windows.)

Target system:

Hardware specifications

Target board (see Selecting Hardware)

Ubuntu Desktop 22.04 LTS bootable USB disk: download the latest Ubuntu Desktop 22.04 LTS ISO image and follow the Ubuntu documentation for creating the USB disk.

USB keyboard and mouse

Monitor

Ethernet cable and Internet access

Local storage device (NVMe or SATA drive, for example). We recommend having 40GB or more of free space.

Note

If you’re working behind a corporate firewall, you’ll likely need to configure a proxy for accessing the Internet, if you haven’t done so already. While some tools use the environment variables http_proxy and https_proxy to get their proxy settings, some use their own configuration files, most notably apt and git. If a proxy is needed and it’s not configured, commands that access the Internet may time out and you may see errors such as “unable to access …” or “couldn’t resolve host …”.
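As a rough sketch of the proxy settings described in the note, the following shows one way to set the environment variables and to generate the separate configuration that apt reads. The proxy URL `http://proxy.example.com:8080` and the file name `95proxy` are placeholders invented for this example; substitute the values your network administrator provides.

```shell
#!/usr/bin/env bash
# Hypothetical proxy URL (assumption); replace it with your site's real proxy.
PROXY_URL="${PROXY_URL:-http://proxy.example.com:8080}"

# Print apt proxy configuration lines for the given proxy URL.
apt_proxy_conf() {
    printf 'Acquire::http::Proxy "%s";\n' "$1"
    printf 'Acquire::https::Proxy "%s";\n' "$1"
}

# Environment variables read by many command-line tools.
export http_proxy="$PROXY_URL"
export https_proxy="$PROXY_URL"

# Show what the apt configuration would contain.
apt_proxy_conf "$PROXY_URL"

# To apply the settings (not run here; the file name 95proxy is an assumption):
#   apt_proxy_conf "$PROXY_URL" | sudo tee /etc/apt/apt.conf.d/95proxy
#   git config --global http.proxy "$PROXY_URL"
```

The script only prints the apt lines, so you can review them before writing anything under /etc.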

Prepare the Development Computer

To set up the ACRN build environment on the development computer:

On the development computer, run the following command to confirm that Ubuntu Desktop 22.04 is running:

cat /etc/os-release

If you have an older version, see the Ubuntu documentation to install a new OS on the development computer.

Download the apt information database about all available package updates for your Ubuntu release. We’ll need it to get the tools and libraries used for ACRN builds:

sudo apt update

This next command upgrades packages already installed on your system with minor updates and security patches. This command is optional as there is a small risk that upgrading your system software can introduce unexpected issues:

sudo apt upgrade -y #optional command to upgrade system

Create a working directory:

mkdir -p ~/acrn-work

Install the necessary ACRN build tools:

sudo apt install -y gcc git make vim libssl-dev libpciaccess-dev uuid-dev \
     libsystemd-dev libevent-dev libxml2-dev libxml2-utils libusb-1.0-0-dev \
     python3 python3-pip libblkid-dev e2fslibs-dev \
     pkg-config libnuma-dev libcjson-dev liblz4-tool flex bison \
     xsltproc clang-format bc libpixman-1-dev libsdl2-dev libegl-dev \
     libgles-dev libdrm-dev gnu-efi libelf-dev liburing-dev \
     build-essential git-buildpackage devscripts dpkg-dev equivs lintian \
     apt-utils pristine-tar dh-python acpica-tools python3-tqdm \
     python3-elementpath python3-lxml python3-xmlschema python3-defusedxml

Get the ACRN hypervisor and ACRN kernel source code, and check out the current release branch.

cd ~/acrn-work
git clone https://github.com/projectacrn/acrn-hypervisor.git
cd acrn-hypervisor
git checkout release_3.3

cd ..
git clone https://github.com/projectacrn/acrn-kernel.git
cd acrn-kernel
git checkout release_3.3
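After working through the steps in this section, a quick sanity check can catch a wrong OS release or a tool that failed to install. The sketch below is not part of the ACRN tooling; the function names are made up for this example, and the list of tools checked is only a small sample of the packages installed above.

```shell
#!/usr/bin/env bash
# Sanity checks for the development computer (illustrative sketch only).

# Succeeds when the given os-release file describes Ubuntu 22.04.
is_ubuntu_2204() {
    local file="${1:-/etc/os-release}"
    [ -r "$file" ] || return 2
    # Source in a subshell so ID and VERSION_ID do not leak into this shell.
    ( . "$file" && [ "${ID:-}" = "ubuntu" ] && [ "${VERSION_ID:-}" = "22.04" ] )
}

# Print each named command that is not found on PATH.
missing_commands() {
    local cmd
    for cmd in "$@"; do
        command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
    done
}

if is_ubuntu_2204; then
    echo "OS check: Ubuntu 22.04 detected"
else
    echo "OS check: this does not look like Ubuntu 22.04"
fi

# A few of the tools installed above; extend the list as needed.
missing="$(missing_commands gcc git make python3 xsltproc)"
if [ -n "$missing" ]; then
    # $missing is left unquoted on purpose so the names print on one line.
    echo "Missing tools:" $missing
else
    echo "All checked tools are on PATH"
fi
```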

Prepare the Target and Generate a Board Configuration File

In this step, you will use the Board Inspector to generate a board configuration file.

A board configuration file is an XML file that stores hardware-specific information extracted from the target system. The file is used to configure the ACRN hypervisor, because each hypervisor instance is specific to your target hardware.

Important

Before running the Board Inspector, you must set up your target hardware and BIOS exactly as you want it, including connecting all peripherals, configuring BIOS settings, and adding memory and PCI devices. For example, you must connect all USB devices you intend to access; otherwise, the Board Inspector will not detect these USB devices for passthrough. If you change the hardware or BIOS configuration, or add or remove USB devices, you must run the Board Inspector again to generate a new board configuration file.

Set Up the Target Hardware

To set up the target hardware environment:

Connect all USB devices, such as a mouse and keyboard.

Connect the monitor and power supply cable.

Connect the target system to the LAN with the Ethernet cable or wifi.

Example of a target system with cables connected:

Install OS on the Target

The target system needs Ubuntu Desktop 22.04 LTS to run the Board Inspector tool. The Ubuntu site has full instructions for downloading the image, creating a bootable USB drive, and installing Ubuntu Desktop. We’ll provide a summary here:

To install Ubuntu 22.04:

Insert the Ubuntu bootable USB disk into the target system.

Power on the target system, and select the USB disk as the boot device in the UEFI menu. Note that the USB disk label presented in the boot options depends on the brand/make of the USB drive. (You will need to configure the BIOS to boot off the USB device first, if that option isn’t available.)

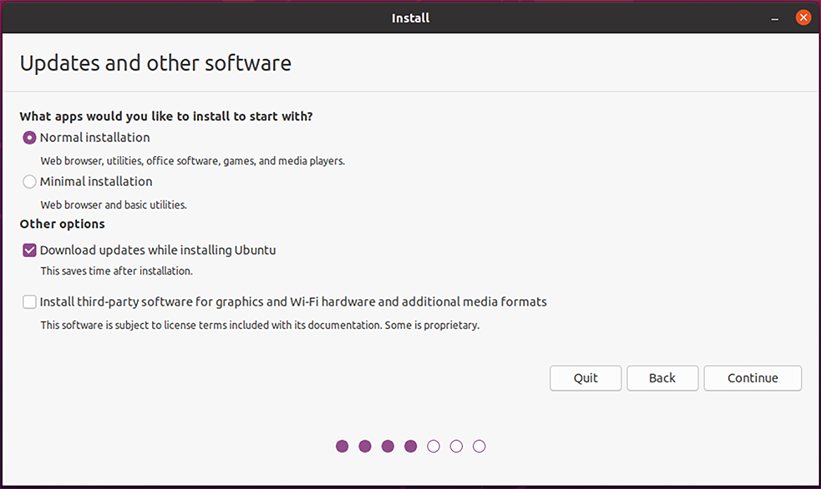

After selecting the language and keyboard layout, select the Normal installation and Download updates while installing Ubuntu (downloading updates requires the target to have an Internet connection).

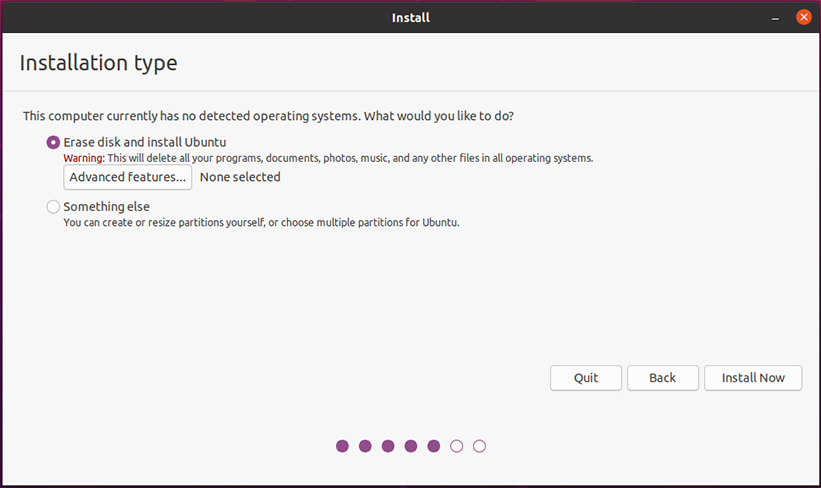

Use the check boxes to choose whether you’d like to install Ubuntu alongside another operating system (if one already exists), or delete your existing operating system and replace it with Ubuntu:

Complete the Ubuntu installation by choosing your geographical location and creating your login details. We use acrn as the username in this guide.

If you choose a username other than acrn, be sure to use that username in the command examples and paths shown in this guide.

After the Ubuntu installation completes on the target and you reboot the system, don’t forget to update the system software (as Ubuntu recommends):

sudo apt update
sudo apt upgrade -y

It’s convenient to use the network to transfer files between the development computer and target system, so we recommend installing the openssh-server package on the target system:

sudo apt install -y openssh-server

This command will install and start the ssh-server service on the target system. We’ll need to know the target system’s IP address to make a connection from the development computer, so find it now with this command:

hostname -I | cut -d ' ' -f 1

Make a working directory on the target system that we’ll use later:

mkdir -p ~/acrn-work
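The `hostname -I | cut -d ' ' -f 1` pipeline used above works because `hostname -I` prints all of the host's addresses on one line, separated by spaces, and `cut` keeps only the first field. The sketch below reproduces that on sample text so you can see the effect without touching the network. The second address in the sample and both function names are assumptions made for this example.

```shell
#!/usr/bin/env bash
# Keep only the first space-separated field, as the cut command above does.
first_ip() {
    printf '%s\n' "$1" | cut -d ' ' -f 1
}

# Rough shape check for a dotted IPv4 address (does not validate ranges).
is_ipv4() {
    printf '%s\n' "$1" | grep -Eq '^([0-9]{1,3}\.){3}[0-9]{1,3}$'
}

# Sample text in the style of "hostname -I" output (assumed values).
sample="10.0.0.200 172.17.0.1 "
ip="$(first_ip "$sample")"

if is_ipv4 "$ip"; then
    echo "target IP: $ip"
else
    echo "no IPv4 address found in first field"
fi
```

If the target has several network interfaces, the first address is not necessarily the one reachable from the development computer, so check the full `hostname -I` output when a connection fails.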

Configure Target BIOS Settings

Boot your target and enter the BIOS configuration editor.

Tip: When you are booting your target, you’ll briefly see an option to enter the BIOS configuration editor, typically by pressing F2 or DEL during the boot and before the GRUB menu (or Ubuntu login screen) appears. If you are not quick enough, you can still choose UEFI settings in the GRUB menu or just reboot the system to try again.

Configure these BIOS settings:

Enable VMX (Virtual Machine Extensions, which provide hardware assist for CPU virtualization).

Enable VT-d (Intel Virtualization Technology for Directed I/O, which provides additional support for managing I/O virtualization).

Disable Secure Boot. This setting simplifies the steps for this example.

The names and locations of the BIOS settings depend on the target hardware and BIOS vendor and version.
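Once the target has rebooted into Ubuntu, you can get a rough indication of whether the VMX and VT-d settings took effect. The sketch below relies on two assumptions that hold on typical Intel systems with a recent kernel but are not guaranteed by ACRN: the `vmx` CPU flag in /proc/cpuinfo is present when VMX is usable, and the firmware publishes an ACPI DMAR table when VT-d is enabled. Treat the result as a hint, not as proof.

```shell
#!/usr/bin/env bash
# Succeeds when the CPU flags in the given cpuinfo file include "vmx".
has_vmx() {
    local cpuinfo="${1:-/proc/cpuinfo}"
    grep -Eq '^flags[[:space:]]*:.*[[:space:]]vmx([[:space:]]|$)' "$cpuinfo" 2>/dev/null
}

# Succeeds when an ACPI DMAR table is exposed in the given tables directory.
has_dmar_table() {
    local tables_dir="${1:-/sys/firmware/acpi/tables}"
    [ -e "$tables_dir/DMAR" ]
}

if has_vmx; then
    echo "VMX: flag present"
else
    echo "VMX: flag not found (check the BIOS setting)"
fi

if has_dmar_table; then
    echo "VT-d: DMAR table present"
else
    echo "VT-d: DMAR table not found (check the BIOS setting)"
fi
```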

Generate a Board Configuration File

Build the Board Inspector Debian package on the development computer:

Move to the development computer.

On the development computer, go to the acrn-hypervisor directory:

cd ~/acrn-work/acrn-hypervisor

Build the Board Inspector Debian package:

debian/debian_build.sh clean && debian/debian_build.sh board_inspector

In a few seconds, the build generates a board_inspector Debian package in the parent (~/acrn-work) directory.

Use the scp command to copy the Board Inspector Debian package from your development computer to the /tmp directory on the target system. Replace 10.0.0.200 with the target system’s IP address you found earlier:

scp ~/acrn-work/python3-acrn-board-inspector*.deb acrn@10.0.0.200:/tmp

Now that we’ve got the Board Inspector Debian package on the target system, install it there:

sudo apt install -y /tmp/python3-acrn-board-inspector*.deb

Reboot the target system:

sudo reboot

Run the Board Inspector on the target system to generate the board configuration file. This example uses the parameter my_board as the file name. The Board Inspector can take a few minutes to scan your target system and create the board XML file with your target system’s information.

cd ~/acrn-work
sudo acrn-board-inspector my_board

Note

If you get an error that mentions Pstate and editing the GRUB configuration, reboot the system and run this command again.

Confirm that the board configuration file my_board.xml was generated in the current directory:

ls ./my_board.xml

From your development computer, use the scp command to copy the board configuration file on your target system back to the ~/acrn-work directory on your development computer. Replace 10.0.0.200 with the target system’s IP address you found earlier:

scp acrn@10.0.0.200:~/acrn-work/my_board.xml ~/acrn-work/
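Before moving on, it can be worth confirming that the copied board file arrived intact. The sketch below checks that the file exists, is non-empty, and, when `xmllint` is available (it comes with the libxml2-utils package installed earlier), that it parses as well-formed XML. The function name is made up for this example, and the check says nothing about whether the file's contents are valid for ACRN.

```shell
#!/usr/bin/env bash
# Succeeds when the file exists, is non-empty, and (if xmllint is
# available) parses as well-formed XML.
check_board_xml() {
    local file="$1"
    [ -s "$file" ] || { echo "missing or empty: $file" >&2; return 1; }
    if command -v xmllint >/dev/null 2>&1; then
        xmllint --noout "$file" || return 1
    fi
    echo "ok: $file"
}

# Path used in this guide; prints a hint if the file is not there yet.
check_board_xml "$HOME/acrn-work/my_board.xml" || echo "board file not ready yet"
```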

Generate a Scenario Configuration File and Launch Script

In this step, you will download, install, and use the ACRN Configurator to generate a scenario configuration file and launch script.

A scenario configuration file is an XML file that holds the parameters of a specific ACRN configuration, such as the number of VMs that can be run, their attributes, and the resources they have access to.

A launch script is a shell script that is used to configure and create a post-launched User VM. Each User VM has its own launch script.

On the development computer, download and install the ACRN Configurator Debian package:

cd ~/acrn-work
wget https://github.com/projectacrn/acrn-hypervisor/releases/download/v3.3/acrn-configurator-3.3.deb -P /tmp

If you already have a previous version of the acrn-configurator installed, you should first remove it:

sudo apt purge acrn-configurator

Then you can install this new version:

sudo apt install -y /tmp/acrn-configurator-3.3.deb

Launch the ACRN Configurator:

acrn-configurator

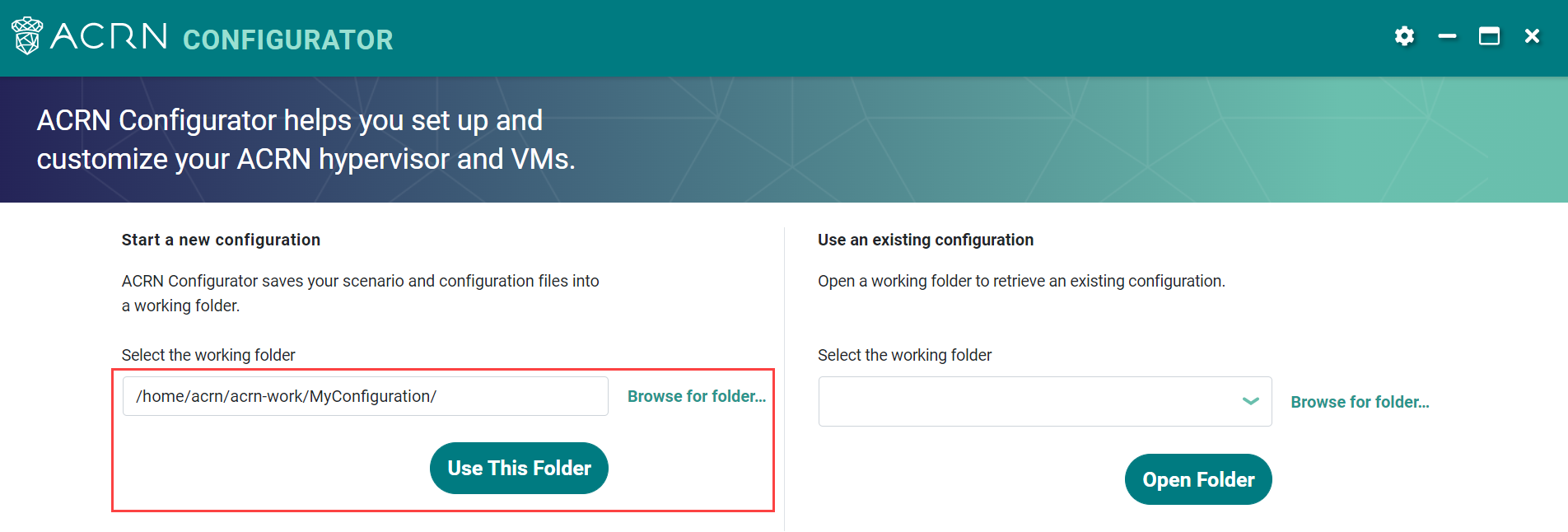

Under Start a new configuration, confirm that the working folder is <path to>/acrn-work/MyConfiguration. Click Use This Folder.

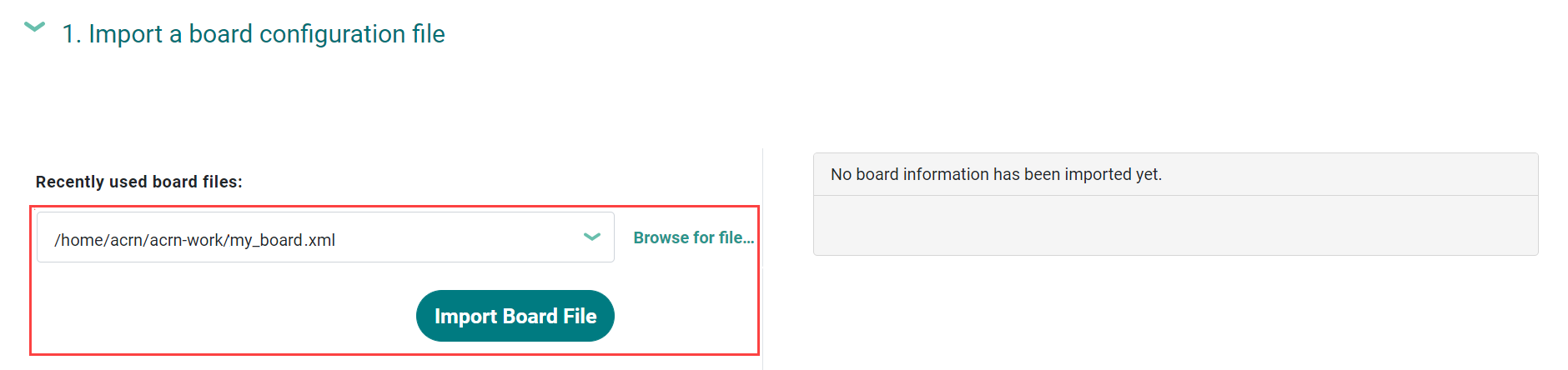

Import your board configuration file as follows:

In the 1. Import a board configuration file panel, click Browse for file.

Browse to ~/acrn-work/my_board.xml and click Open.

Click Import Board File.

The ACRN Configurator makes a copy of your board file, changes the file extension to .board.xml, and saves the file to the working folder as my_board.board.xml.

Create a new scenario as follows:

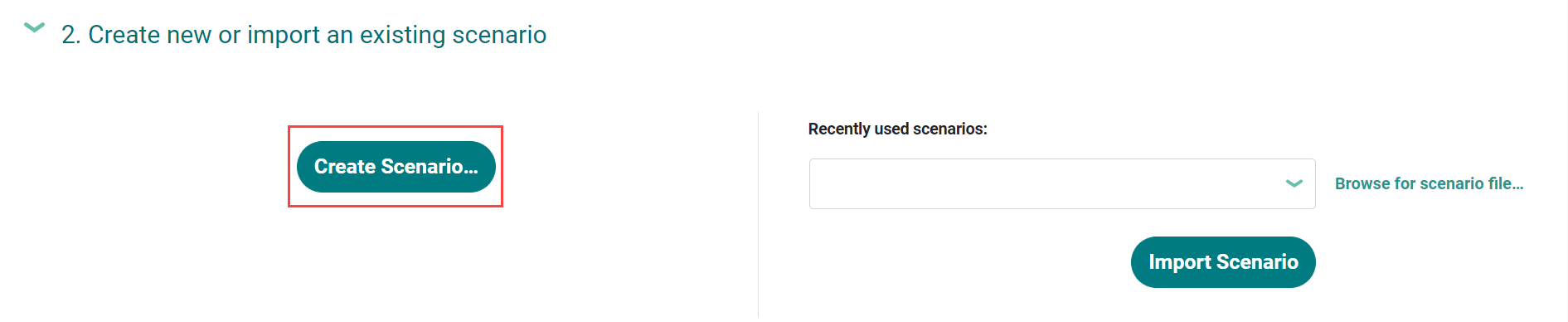

In the 2. Create new or import an existing scenario panel, click Create Scenario.

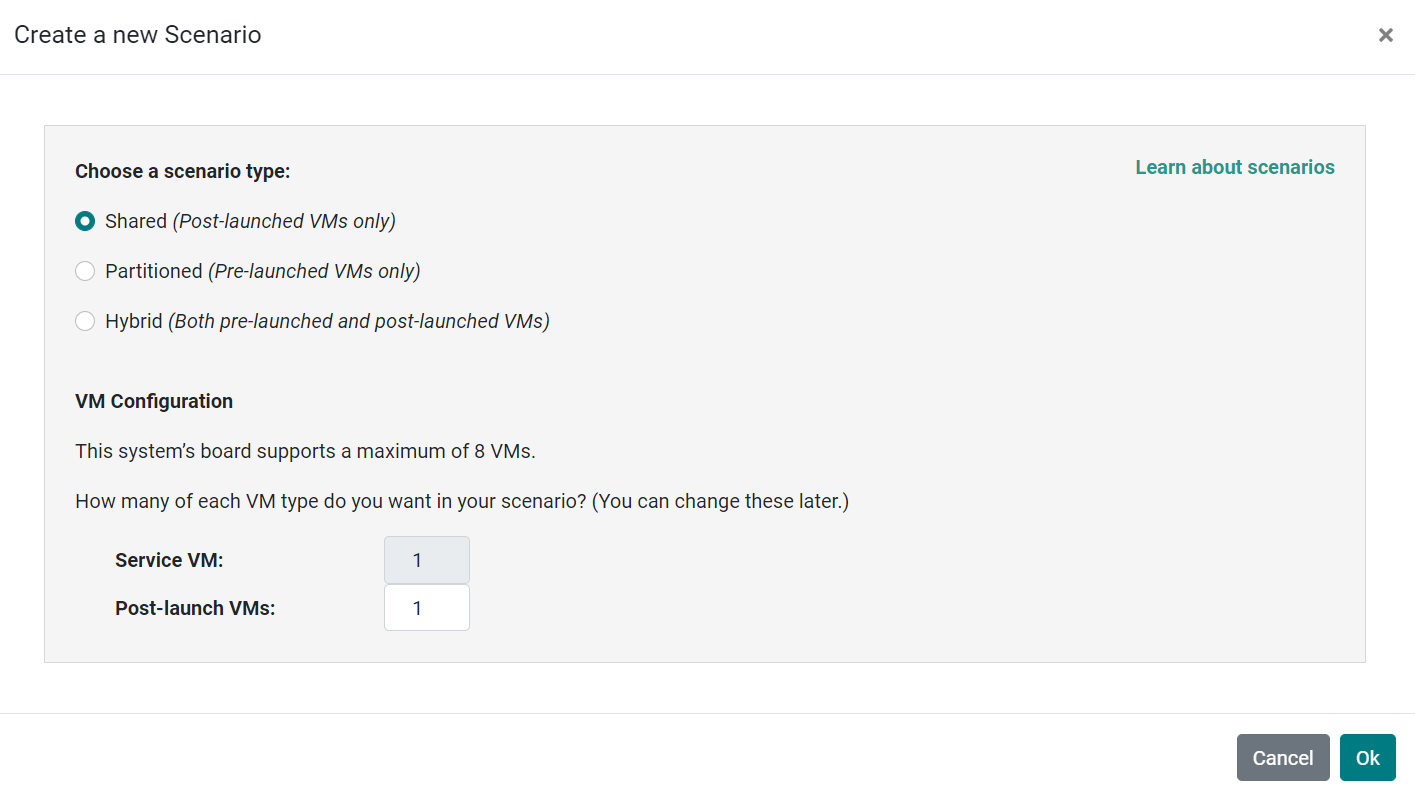

In the dialog box, confirm that Shared (Post-launched VMs only) is selected.

Confirm that one Service VM and one post-launched VM are selected.

Click Ok.

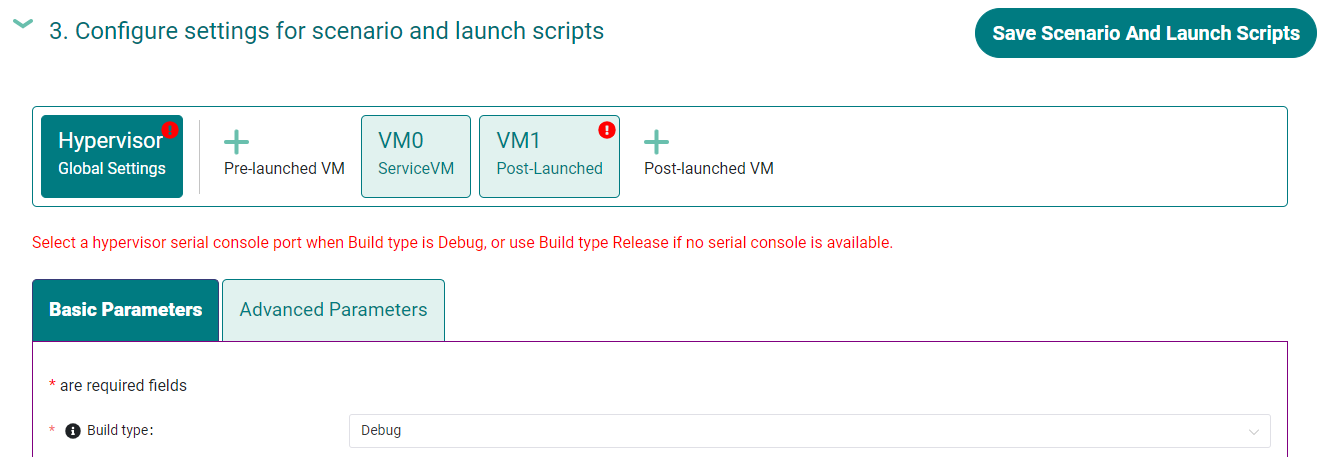

In the 3. Configure settings for scenario and launch scripts panel, the scenario’s configurable items appear. Feel free to look through all the available configuration settings. This is where you can change the settings to meet your application’s particular needs. But for now, you will update only a few settings for functional and educational purposes.

You may see some error messages from the Configurator, such as shown here:

The Configurator does consistency and validation checks when you load or save a scenario. Notice the Hypervisor and VM1 tabs both have an error icon, meaning there are issues with configuration options in two areas. Since the Hypervisor tab is currently highlighted, we’re seeing an issue we can resolve on the Hypervisor settings. Once we resolve all the errors and save the scenario (forcing a full validation of the schema again), these error indicators and messages will go away.

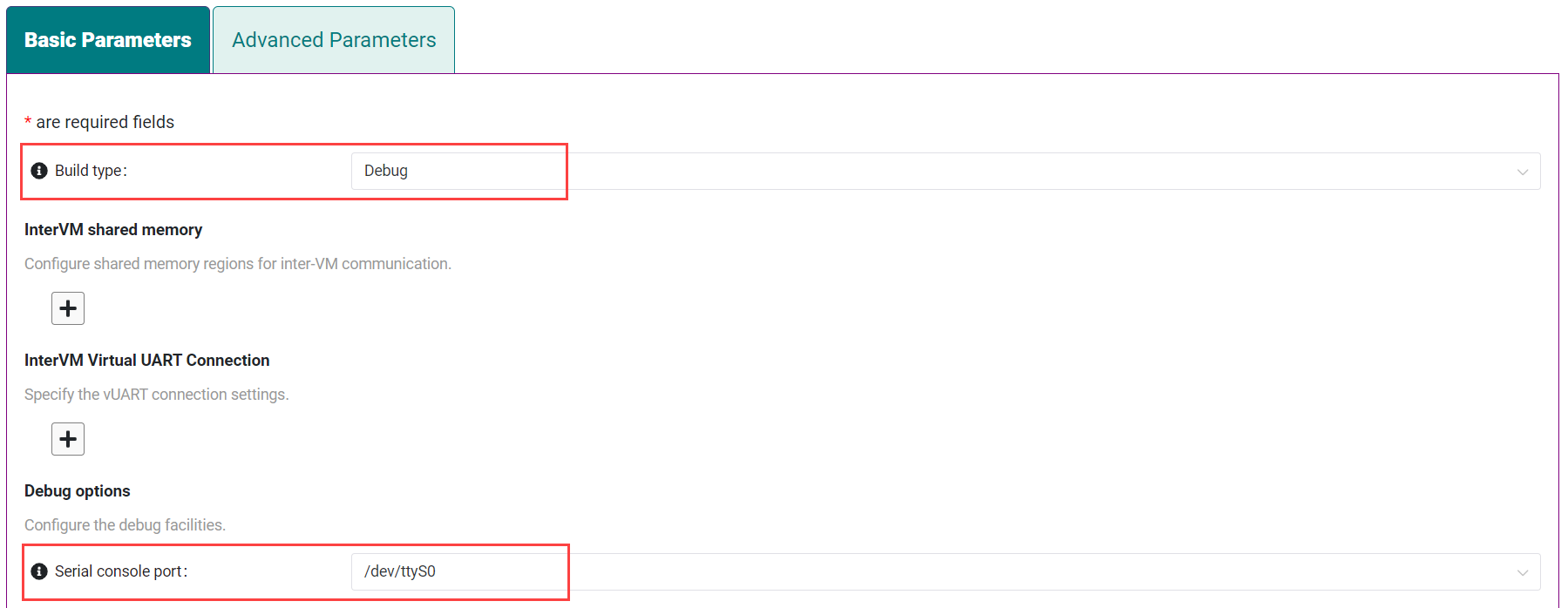

Click the Hypervisor Global Settings > Basic Parameters tab, select the Debug build type, and select the serial console port (the example shows /dev/ttyS0, but yours may be different). If your board doesn’t have a serial console port, select the Release build type. The Debug build type requires a serial console port (and is reporting an error because a serial console port hasn’t been configured yet).

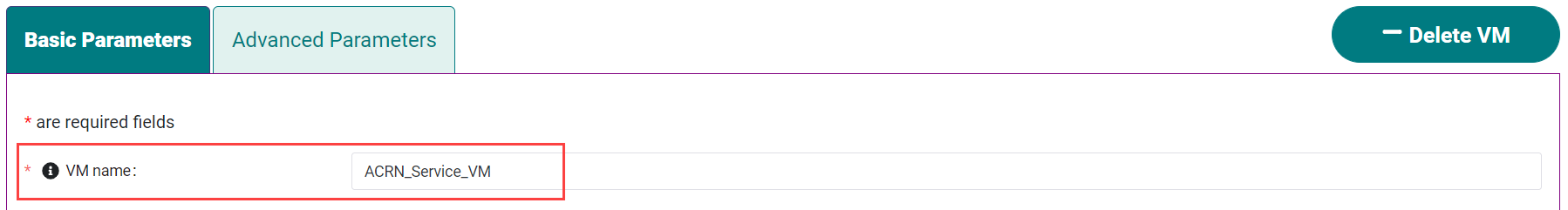

Click the VM0 ServiceVM > Basic Parameters tab and change the VM name to ACRN_Service_VM for this example.

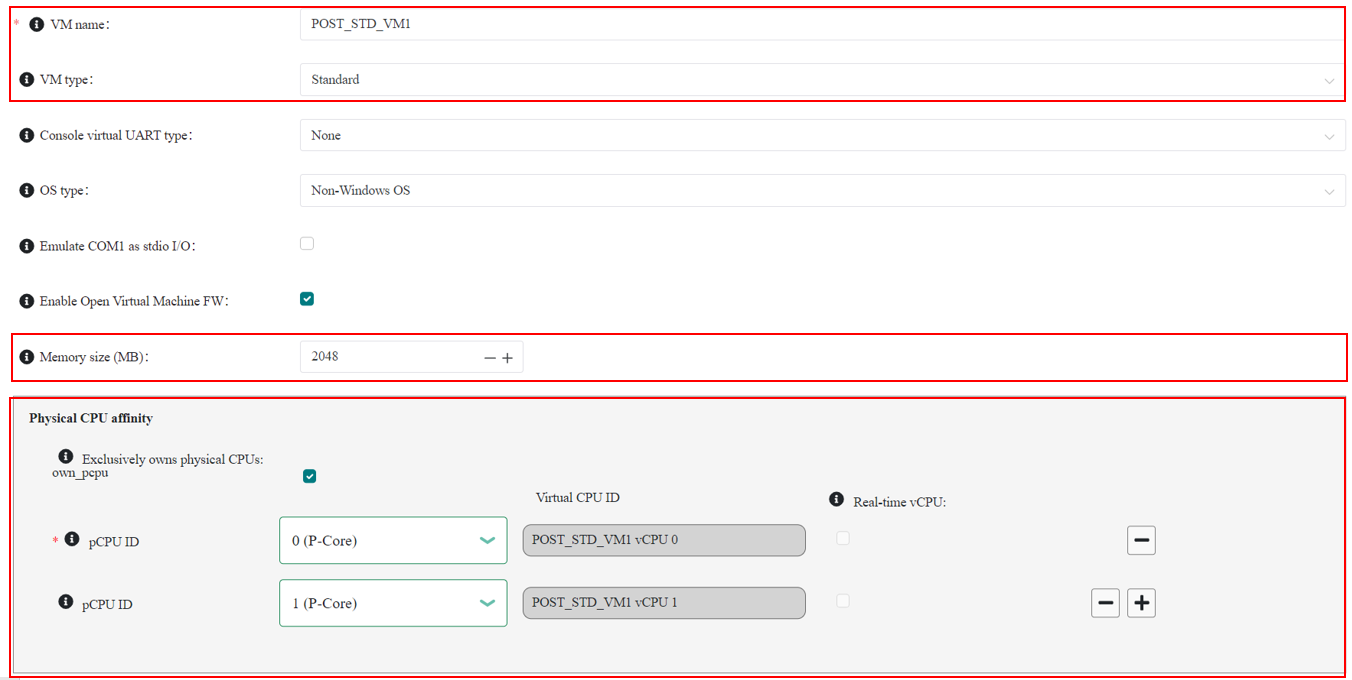

Configure the post-launched VM as follows:

Click the VM1 Post-launched > Basic Parameters tab and change the VM name to

POST_STD_VM1for this example.Confirm that the VM type is

Standard. In the previous step,STDin the VM name is short for Standard.Scroll down to Memory size (MB) and change the value to

2048. For this example, we will use Ubuntu 22.04 to boot the post-launched VM. Ubuntu 22.04 needs at least 2048 MB to boot.For Physical CPU affinity, select pCPU ID

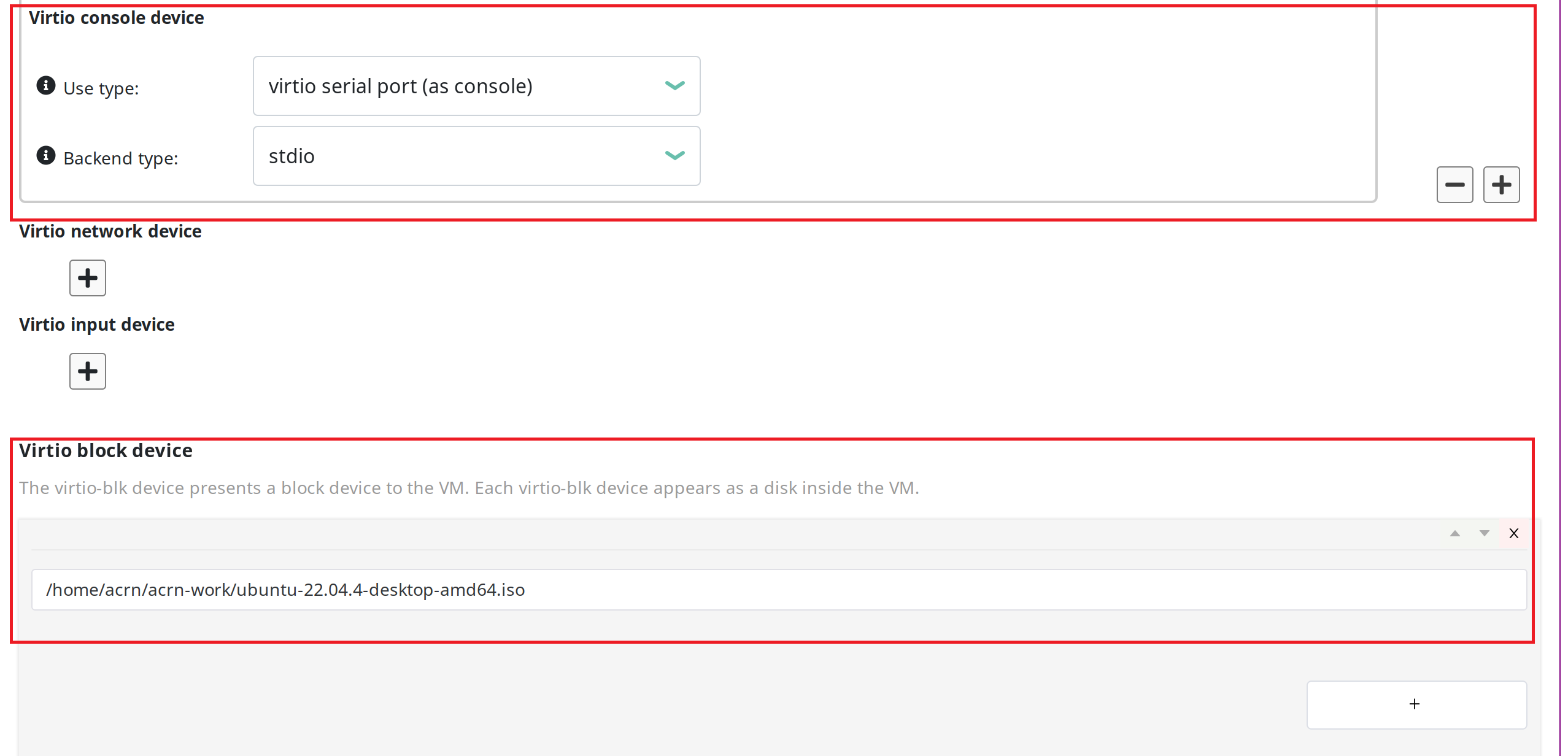

0, then click + and select pCPU ID1to affine (or pin) the VM to CPU cores 0 and 1. (That will resolve the missing physical CPU affinity assignment error.)For Virtio console device, click + to add a device and keep the default options.

For Virtio block device, click + and enter

/home/acrn/acrn-work/ubuntu-22.04.4-desktop-amd64.iso. This parameter specifies the VM’s OS image and its location on the target system. Later in this guide, you will save the ISO file to that directory. (If you used a different username when installing Ubuntu on the target system, here’s where you’ll need to change theacrnusername to the username you used.)

Scroll up to the top of the panel and click Save Scenario And Launch Scripts to generate the scenario configuration file and launch script.

Click the x in the upper-right corner to close the ACRN Configurator.

Confirm that the scenario configuration file scenario.xml appears in the working directory:

ls ~/acrn-work/MyConfiguration/scenario.xml

Confirm that the launch script appears in the working directory:

ls ~/acrn-work/MyConfiguration/launch_user_vm_id1.sh
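The two `ls` checks above, plus a check for the board file copy that the Configurator saves with a .board.xml extension, can be combined into one helper. This is only a convenience sketch; the function name is an assumption, and it tests for the presence of the files, not their contents.

```shell
#!/usr/bin/env bash
# Report files that the Configurator should have written to the working
# folder. Returns non-zero when anything is missing.
check_config_dir() {
    local dir="$1" missing=0 f
    for f in scenario.xml launch_user_vm_id1.sh; do
        [ -f "$dir/$f" ] || { echo "missing: $f"; missing=1; }
    done
    # The Configurator saves its copy of the board file as <name>.board.xml.
    if ! ls "$dir"/*.board.xml >/dev/null 2>&1; then
        echo "missing: *.board.xml"
        missing=1
    fi
    return "$missing"
}

# Working folder used in this guide.
check_config_dir "$HOME/acrn-work/MyConfiguration" \
    && echo "configuration folder looks complete"
```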

Build ACRN

On the development computer, build the ACRN hypervisor:

cd ~/acrn-work/acrn-hypervisor
debian/debian_build.sh clean && debian/debian_build.sh -c ~/acrn-work/MyConfiguration

The build typically takes a few minutes. When done, the build generates several Debian packages in the parent (~/acrn-work) directory:

cd ../
ls *.deb
acrnd_*.deb acrn-dev_*.deb acrn-devicemodel_*.deb
acrn-doc_*.deb acrn-hypervisor_*.deb acrn-lifemngr_*.deb
acrn-system_*.deb acrn-tools_*.deb grub-acrn_*.deb

These Debian packages contain the ACRN hypervisor and tools to ease installing ACRN on the target.

Build the ACRN kernel for the Service VM:

If you have built the ACRN kernel before, run the following command to remove all artifacts from the previous build. Otherwise, an error will occur during the build.

cd ~/acrn-work/acrn-kernel
make distclean

Build the ACRN kernel:

cd ~/acrn-work/acrn-kernel
cp kernel_config_service_vm .config
make olddefconfig
make -j $(nproc) deb-pkg

The kernel build can take 15 minutes or less on a fast computer, but could take two hours or more depending on the performance of your development computer. When done, the build generates several Debian packages in the directory above the build root directory:

cd ..
ls *acrn-service-vm*.deb
linux-headers-6.1.80-acrn-service-vm_6.1.80-acrn-service-vm-1_amd64.deb
linux-image-6.1.80-acrn-service-vm_6.1.80-acrn-service-vm-1_amd64.deb
linux-libc-dev_6.1.80-acrn-service-vm-1_amd64.deb

Use the scp command to copy files from your development computer to the target system. Replace 10.0.0.200 with the target system’s IP address you found earlier:

sudo scp ~/acrn-work/acrn*.deb \
    ~/acrn-work/grub*.deb \
    ~/acrn-work/*acrn-service-vm*.deb \
    ~/acrn-work/MyConfiguration/launch_user_vm_id1.sh \
    acrn@10.0.0.200:~/acrn-work
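If one of the builds failed partway through, the scp command above may silently copy fewer packages than the target needs. As a hedged sketch, the helper below lists which of the glob patterns used in that command match nothing in the work directory, so you can spot a missing artifact before copying. The function name is an assumption for this example, and `find -maxdepth` is assumed to be available (it is in GNU findutils on Ubuntu).

```shell
#!/usr/bin/env bash
# Print every file-name pattern that matches nothing directly under the
# given directory. Usage: unmatched_patterns DIR PATTERN...
unmatched_patterns() {
    local dir="$1" pattern
    shift
    for pattern in "$@"; do
        if [ -z "$(find "$dir" -maxdepth 1 -name "$pattern" -print 2>/dev/null)" ]; then
            printf '%s\n' "$pattern"
        fi
    done
}

# Patterns taken from the scp command in this section.
WORK_DIR="$HOME/acrn-work"
unmatched="$(unmatched_patterns "$WORK_DIR" 'acrn*.deb' 'grub*.deb' '*acrn-service-vm*.deb')"

if [ -n "$unmatched" ]; then
    echo "No files match these patterns in $WORK_DIR:"
    printf '%s\n' "$unmatched"
else
    echo "All package patterns match at least one file"
fi

# The launch script is a single known file, so test it directly.
[ -f "$WORK_DIR/MyConfiguration/launch_user_vm_id1.sh" ] \
    || echo "Launch script not found in $WORK_DIR/MyConfiguration"
```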

Install ACRN

On the target system, install the ACRN Debian package and ACRN kernel Debian packages using these commands:

cd ~/acrn-work
cp ./acrn*.deb ./grub*.deb ./*acrn-service-vm*.deb /tmp
sudo apt install /tmp/acrn*.deb /tmp/grub*.deb /tmp/*acrn-service-vm*.deb

Modify the GRUB menu display: run sudo vi /etc/default/grub, comment out the hidden style, and change the timeout to 5 seconds (leave other lines as they are), as shown:

#GRUB_TIMEOUT_STYLE=hidden
GRUB_TIMEOUT=5

Then install the new GRUB menu using:

sudo update-grub

Reboot the system:

sudo reboot

The target system will reboot into the ACRN hypervisor and start the Ubuntu Service VM.

Confirm that you see the GRUB menu with “Ubuntu with ACRN hypervisor, with Linux 6.1.80-acrn-service-vm (ACRN 3.3)” entry. Select it and proceed to booting ACRN. (It may be auto-selected, in which case it will boot with this option automatically in 5 seconds.)

An example GRUB menu is shown below:

                        GNU GRUB  version 2.04
────────────────────────────────────────────────────────────────────────────────
 Ubuntu
 Advanced options for Ubuntu
 Ubuntu-ACRN Board Inspector, with Linux 6.5.0-18-generic
 Ubuntu-ACRN Board Inspector, with Linux 6.1.80-acrn-service-vm
 Memory test (memtest86+x64.efi)
 Memory test (memtest86+x64.efi, serial console)
 Ubuntu with ACRN hypervisor, with Linux 6.5.0-18-generic (ACRN 3.3)
*Ubuntu with ACRN hypervisor, with Linux 6.1.80-acrn-service-vm (ACRN 3.3)
 UEFI Firmware Settings
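The edit to /etc/default/grub described in this section can also be made without an interactive editor. The sketch below shows the two substitutions with `sed` and demonstrates them on a throwaway sample file, not on the real configuration. The function name and the sample file contents are assumptions for this example.

```shell
#!/usr/bin/env bash
# Print the given GRUB defaults file with the hidden menu style commented
# out and the timeout set to 5 seconds. The input file is not modified.
patch_grub_defaults() {
    sed -e 's/^GRUB_TIMEOUT_STYLE=hidden/#&/' \
        -e 's/^GRUB_TIMEOUT=.*/GRUB_TIMEOUT=5/' "$1"
}

# Demonstrate on a sample file rather than on /etc/default/grub.
sample="$(mktemp)"
printf '%s\n' 'GRUB_DEFAULT=0' 'GRUB_TIMEOUT_STYLE=hidden' 'GRUB_TIMEOUT=0' > "$sample"
patch_grub_defaults "$sample"
rm -f "$sample"

# To apply the change for real (not run here):
#   patch_grub_defaults /etc/default/grub > /tmp/grub.new
#   sudo cp /tmp/grub.new /etc/default/grub
#   sudo update-grub
```

Writing the result to a separate file first lets you compare it with the original before replacing anything.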

Run ACRN and the Service VM

The ACRN hypervisor boots the Ubuntu Service VM automatically.

On the target, log in to the Service VM using the acrn username and password you set up previously. (It will look like a normal graphical Ubuntu session.)

Verify that the hypervisor is running by checking dmesg in the Service VM:

dmesg | grep -i hypervisor

You should see “Hypervisor detected: ACRN” in the output. Example output of a successful installation (yours may look slightly different):

[ 0.000000] Hypervisor detected: ACRN

Enable and start the Service VM’s system daemon for managing network configurations, so the Device Model can create a bridge device (acrn-br0) that provides User VMs with wired network access:

Warning

The IP address of Service VM may change after executing the following command.

sudo cp /usr/share/doc/acrnd/examples/* /etc/systemd/network
sudo systemctl enable --now systemd-networkd
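The dmesg check in this section can be scripted when you want a yes/no answer, for example in a setup script of your own. The sketch below extracts the hypervisor name from kernel log text and runs on a sample line modeled on the output shown above. The function name is an assumption for this example.

```shell
#!/usr/bin/env bash
# Read kernel log text on stdin and print the name from the first
# "Hypervisor detected: <name>" line, if there is one.
detected_hypervisor() {
    sed -n '/Hypervisor detected: /{s/.*Hypervisor detected: //p;q;}'
}

# Sample line modeled on the dmesg output shown in this guide.
sample='[    0.000000] Hypervisor detected: ACRN'
name="$(printf '%s\n' "$sample" | detected_hypervisor)"

if [ "$name" = "ACRN" ]; then
    echo "running under ACRN"
else
    echo "ACRN not detected (found: ${name:-none})"
fi

# On the Service VM you would feed it the real log instead:
#   sudo dmesg | detected_hypervisor
```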

Launch the User VM

On the target system, use the web browser to visit the official Ubuntu website and get the Ubuntu Desktop 22.04 LTS ISO image ubuntu-22.04.4-desktop-amd64.iso for the User VM. (This is the same image you specified earlier in the ACRN Configurator UI.) Alternatively, instead of downloading it again, you could use scp to copy the ISO image file from the development system to the ~/acrn-work directory on the target system.

If you downloaded the ISO file on the target system, copy it from the Downloads directory to the ~/acrn-work/ directory (the location we specified in the ACRN Configurator for the VM’s Virtio block device), for example:

cp ~/Downloads/ubuntu-22.04.4-desktop-amd64.iso ~/acrn-work

Launch the User VM:

sudo chmod +x ~/acrn-work/launch_user_vm_id1.sh
sudo ~/acrn-work/launch_user_vm_id1.sh

It may take about a minute for the User VM to boot and start running the Ubuntu image. You will see a lot of output, then the console of the User VM will appear as follows:

Welcome to Ubuntu 22.04.4 LTS (GNU/Linux 6.5.0-18-generic x86_64)

 * Documentation:  https://help.ubuntu.com
 * Management:     https://landscape.canonical.com
 * Support:        https://ubuntu.com/advantage

Expanded Security Maintenance for Applications is not enabled.

0 updates can be applied immediately.

Enable ESM Apps to receive additional future security updates.
See https://ubuntu.com/esm or run: sudo pro status

The list of available updates is more than a week old.
To check for new updates run: sudo apt update

The programs included with the Ubuntu system are free software;
the exact distribution terms for each program are described in the
individual files in /usr/share/doc/*/copyright.

Ubuntu comes with ABSOLUTELY NO WARRANTY, to the extent permitted by
applicable law.

To run a command as administrator (user "root"), use "sudo <command>".
See "man sudo_root" for details.

ubuntu@ubuntu:~$

This User VM and the Service VM are running different Ubuntu images. Use this command to see that the User VM is running the downloaded Ubuntu image:

acrn@ubuntu:~$ uname -r
6.5.0-18-generic

Then open a new terminal window and use the same command to see that the Service VM is running the acrn-kernel Service VM image:

acrn@asus-MINIPC-PN64:~$ uname -r
6.1.80-acrn-service-vm

The User VM has launched successfully. You have completed this ACRN setup.

(Optional) To shut down the User VM, run this command in the terminal that is connected to the User VM:

sudo poweroff
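With two terminals open, it is easy to lose track of which one belongs to which VM. In this guide the Service VM kernel release ends in `-acrn-service-vm`, so a small helper can tell the two apart from `uname -r`. This is a sketch based only on the release strings shown above; the function name is an assumption, and a kernel built with a different local version suffix would not match.

```shell
#!/usr/bin/env bash
# Classify a kernel release string by the suffix used in this guide.
kernel_role() {
    case "$1" in
        *-acrn-service-vm) echo "Service VM kernel" ;;
        *)                 echo "other kernel" ;;
    esac
}

# Report on the kernel of whichever system this terminal is connected to.
release="$(uname -r)"
echo "$release: $(kernel_role "$release")"
```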

Next Steps

Configuration and Development Overview describes the ACRN configuration process, with links to additional details.

A follow-on Sample Application User Guide tutorial shows how to configure, build, and run a more real-world sample application with a Real-time VM communicating with an HMI VM via inter-VM shared memory (IVSHMEM).